Data Strategy & Infrastructure for AI Compliance

Quick answer:

AI data infrastructure compliance means architecting your data pipelines, logging systems, and MLOps tooling so that every AI decision is traceable, auditable, and documented – before a regulator or auditor asks. The EU AI Act makes this mandatory for high-risk AI. Most enterprises don’t have it. The gap is not a model problem. It’s a foundation problem.

Your AI model passed every benchmark. Your data science team is proud of it. Your board has approved the budget. And then legal sends back a four-page list of questions about audit trails, data lineage, and technical documentation – none of which were considered when the pipeline was built.

This is not a hypothetical. It is happening across financial institutions in Zurich and Frankfurt, healthcare networks in the Netherlands and France, and enterprise software vendors across the US and the UK. The model is fine. The infrastructure around it is not.

The EU AI Act – now in active enforcement with the full high-risk compliance deadline arriving August 2, 2026 – requires high-risk AI systems to maintain technical documentation, automatic logging, and data lineage tracking as non-negotiable conditions of deployment. For organizations that built their AI capabilities on ad-hoc MLOps pipelines and duct-taped data flows, that deadline is closer than it looks.

This article is about what “AI-ready” data infrastructure actually means, why poor foundations undermine both AI quality and compliance, and how to start closing the gap – before someone else forces you to.

1. Why Data Infrastructure Is Now a Legal Issue

Most organizations built their AI capabilities the way they built everything else: iteratively, opportunistically, and faster than the governance could follow. A team spins up a proof-of-concept on a notebook. It works. Leadership says ship it. The notebook becomes production.

That approach worked when AI was an experiment. It does not work when AI is making decisions that affect people’s access to credit, employment, healthcare, or essential services – which is exactly the scope the EU AI Act targets.

For high-risk AI systems, the Act requires:

- A risk management system maintained throughout the entire AI lifecycle

- Data governance documentation proving training data is representative and audited

- Technical documentation covering system architecture, training approach, and evaluation results – kept current, not filed away

- Automatic logging of every decision, data input, and model version in production

- Human oversight mechanisms built into the architecture, not retrofitted afterward

Switzerland is not an EU member, but Swiss enterprises serving EU markets are within scope. FINMA has already begun requesting AI governance disclosures aligned with EU standards. For Swiss banks, insurers, and software firms supplying EU clients, these requirements are effectively already in force operationally.

If you have not read our overview of how the EU AI Act classifies risk tiers and what each tier requires, that is a useful starting point before going further – available on our blog

2. What AI Compliance Requires from Your Data Infrastructure

The EU AI Act imposes specific data infrastructure obligations on high-risk AI systems – not as best practices, but as legal prerequisites for deployment:

- Data governance procedures ensuring training, validation, and test datasets are relevant, representative, and bias-audited before development begins

- Data lineage documentation tracing every dataset from source through transformation to model training

- Automatic event logging that records inputs, outputs, and operational events for post-incident investigation

- Technical documentation maintained throughout the entire system lifecycle, not only at launch

- Post-market monitoring plans that detect drift, degradation, and unexpected behavior in production

Each of these is an engineering requirement embedded in your architecture – not a report you write after the fact.

For a broader view of how these requirements fit into the EU AI Act’s overall risk framework, see our article on EU AI Act compliance for enterprises.

3. The Five Layers of AI-Ready Data Infrastructure

“AI-ready infrastructure” has become a marketing phrase. Every cloud vendor and every systems integrator uses it. It means something specific here: infrastructure that can support compliant AI at production scale, not just infrastructure that can run a model.

Layer 1 – Data ingestion and quality control

Every data source feeding your AI system needs a validation gate at ingestion: schema checks, completeness checks, range validation, deduplication, and anomaly detection – automated, not manual. Data that fails validation should be quarantined and flagged, not silently propagated. Most enterprise pipelines today skip this layer entirely.

Layer 2 – Data governance and lineage

This is where compliance is won or lost. Lineage must cover the full chain: from the original source, through every transformation, to the final training dataset. Governance includes access controls, retention policies, consent management (critical for GDPR-compliant AI), and documented approval workflows. We cover this in depth in a dedicated article on data governance and lineage – link to be added.

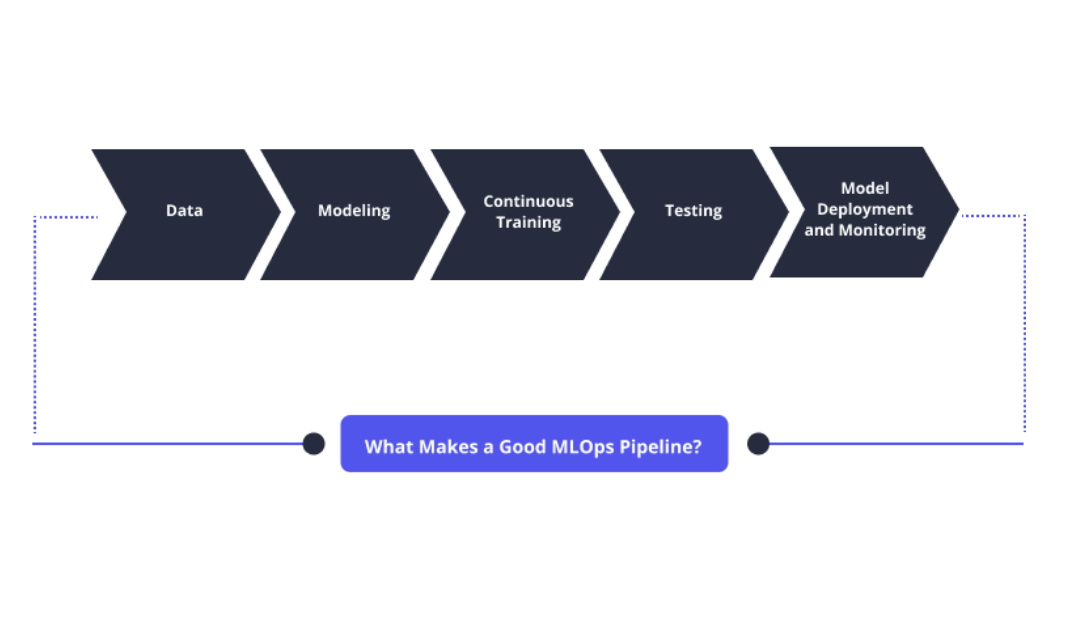

Layer 3 – MLOps and automated documentation

MLOps manages the AI model lifecycle: versioning, training orchestration, experiment tracking, deployment automation, and rollback capability. From a compliance standpoint, it creates the audit trail for model development – which version of data produced which model version, with which hyperparameters, evaluated against which metrics. Without it, that trail exists only in notebooks and memory. We cover MLOps in detail in a dedicated article on MLOps and automated documentation – link to be added.

Layer 4 – Model monitoring and drift detection

A model that performed well at deployment will degrade as real-world conditions shift from its training distribution. Monitoring infrastructure needs to track prediction distribution shifts, feature importance changes, accuracy against labeled ground truth, and fairness metrics across protected groups. This is where compliance becomes a continuous engineering function.

Layer 5 – Audit and compliance reporting

The final layer assembles evidence from the preceding four into conformity assessment documentation, incident reports, bias evaluation results, system change logs, and post-market monitoring summaries. For organizations running multiple high-risk AI systems, this needs automation – generating compliance reports manually for each system at each cycle is not sustainable. We cover the infrastructure implications in a dedicated article on scaling AI infrastructure – link to be added.

4. Where Ad-Hoc MLOps Pipelines Break Down

The term MLOps – machine learning operations – covers the engineering practices that take a model from experiment to reliable production system. Done well, it is what makes the difference between AI that holds up under real conditions and AI that quietly degrades or fails.

Most enterprises do not have mature MLOps. They have a collection of scripts, notebooks, scheduled jobs, and manual handoffs that handle model deployment in practice. It works – until it doesn’t.

The specific failure modes worth understanding:

No Model Versioning

If the system cannot identify which version of a model made a given decision, a compliance audit cannot reconstruct the decision context. Model versioning – with clear registry entries, deployment records, and rollback capability – is the minimum required for EU AI Act audit trails. Most ad-hoc MLOps setups do not have it.

Manual Documentation

Technical documentation for AI systems – architecture, training methodology, evaluation results – needs to be current, not historical. In ad-hoc environments, documentation is typically written once, filed, and never touched again. When the model is retrained on new data, the documentation does not follow. That gap is a compliance liability.

MLOps automation and automated documentation tooling is one of the areas this content series covers in detail. The short version: documentation that has to be written manually will not be updated consistently. Infrastructure that generates documentation automatically – from training runs, evaluation logs, and deployment events – is the only durable solution at scale.

No Drift Monitoring

A model trained in 2024 on one data distribution will behave differently in 2026 on a different one. Without automated monitoring for concept drift and data drift, that degradation is invisible. From a compliance standpoint, deploying a high-risk AI system without drift monitoring is deploying without the risk management system the Act requires.

Fragmented Governance

In many enterprises, multiple teams are using multiple AI tools with no unified governance layer – no central registry of what models are deployed, no access controls on which data those models can access, and no audit trail that spans the organization. Under the EU AI Act, the deploying organization is accountable for what its AI systems do – regardless of which internal team built or deployed them.

For a detailed breakdown of how these fragmented pipelines contribute to production AI failures, our article on why enterprise AI fails in production is worth reading – linked on our blog

5. Where Most Enterprises Are Currently Falling Short

The most common data infrastructure gaps are predictable – not exotic. Across financial services, healthcare, and enterprise software in markets from Switzerland to the Netherlands and the US, the same four problems appear:

- No data lineage tooling – transformation history exists only in code comments and tribal knowledge

- ML experiment tracking limited to notebooks and local files – no reproducibility, no audit trail

- Model performance monitoring that relies on manual review rather than automated alerting

- Compliance documentation written retrospectively, after deployment, rather than maintained throughout development

Data Quality as a Compliance Requirement, Not Just a Performance Metric

Data quality checks need to be logged as part of the pipeline, not just run and forgotten. Results of bias testing need to be retained. Data cleaning decisions need to be recorded with their rationale.

This is where many US-headquartered companies operating in the EU get caught off-guard. US data practices – particularly in fintech and healthcare AI – have been shaped primarily by accuracy and performance benchmarks. EU compliance adds a second dimension: can you demonstrate that the data was appropriate, representative, and scrutinized? Answering that question requires infrastructure that captures the process, not just the outcome.

The Monitoring Gap

Most organizations have application monitoring. Far fewer have AI-specific monitoring: prediction drift tracking, feature importance shifts, and fairness metrics across demographic groups in production. Model degradation gets discovered through customer complaints – not through the proactive monitoring the EU AI Act requires.

Scaling AI Infrastructure Without Breaking Compliance

A fundamental tension exists between rapid AI scaling and regulatory compliance. While moving fast often means treating documentation as an afterthought, compliant scaling introduces intentional friction-such as deployment gates that require complete documentation and lineage metadata before models move to production.

Organizations that succeed in heavily regulated sectors (like BFSI and healthcare) treat compliance infrastructure as a core engineering investment rather than minimizing it as overhead. As AI systems evolve in complexity-from single models to real-time agentic systems-compliance infrastructure must scale concurrently.

For enterprises operating under FINMA guidance: The Swiss Financial Market Supervisory Authority has increasingly aligned its AI governance expectations with EU standards. Banks and insurers in Switzerland deploying AI in credit, risk, or compliance functions should treat EU AI Act data infrastructure requirements as directly applicable – regulatory alignment between Bern and Brussels is advancing rapidly.

6. How to Assess Where You Stand Right Now

Before building anything, you need to know what you have. The assessment is straightforward, though the findings rarely are.

Inventory your AI systems

List every AI system in use – including third-party tools embedded in enterprise software, and any shadow AI tools employees are using outside IT oversight. You cannot classify what you have not found. This is the step most enterprises underestimate, because the actual number of AI systems in use is almost always higher than the number leadership has approved.

Classify by risk level

Apply the EU AI Act’s four-tier risk framework to each system. The classification drives everything else. A customer service chatbot has disclosure obligations. A credit scoring model has documentation, logging, oversight, and audit trail requirements. If you are uncertain about classification, conservative is the right default – misclassifying a high-risk system carries more risk than the cost of additional compliance steps.

Audit your current logging and documentation

For each AI system, ask: Can you reconstruct a specific decision made six months ago? Can you identify which training data version and model version were in use at a specific date? Can you show an auditor the validation steps applied to your training data? If the answer to any of these is no, you have identified where the infrastructure work needs to start.

Map your data lineage coverage

Determine what percentage of your data flows have documented lineage. For most organizations, this is lower than expected – particularly for data that crosses team or system boundaries. Unmapped lineage is undocumented risk.

Action:

If you are in financial services, insurance, or healthcare and are uncertain where your AI systems sit in the compliance landscape, our team at IMT Solutions has worked through this assessment with clients across Switzerland, Germany, France, and beyond. The assessment is the right starting point – contact us at imt-soft.com/en/contact/.

6. Conclusion

The enterprises that navigate AI compliance successfully are not the ones with the most sophisticated models. They are the ones that built the data infrastructure capable of proving those models are trustworthy, traceable, and auditable.

Data governance, MLOps practices, lineage tracking, and production monitoring are not optional additions to an AI program. Under the EU AI Act, for high-risk systems, they are the program.

IMT Solutions helps enterprises across Switzerland, the EU, and the US architect, build, and certify AI data infrastructure that meets both the technical and regulatory bar. Explore our case studies or contact us directly to talk through your specific situation.

FAQ: AI Data Infrastructure & Compliance

What data infrastructure does the EU AI Act require for high-risk AI systems?

High-risk AI systems under the EU AI Act must maintain: data governance procedures ensuring training data is representative and bias-audited; lineage documentation tracing every dataset from source to training; automatic event logging for post-incident investigation; technical documentation throughout the system lifecycle; and post-market monitoring that detects drift and adverse impacts. These are engineering requirements, not paperwork.

What is data lineage and why does it matter for AI compliance?

Data lineage is the ability to trace the full history of a dataset – where it came from, what transformations were applied, when it was last validated, and who authorized its use. Without lineage tracking, organizations cannot answer regulatory questions with evidence – only assertions. Under the EU AI Act, assertions are not sufficient for high-risk AI conformity. Tools like Apache Atlas, DataHub, or OpenLineage address this requirement.

What is MLOps and how does it support AI compliance?

MLOps manages the AI model development lifecycle with reproducibility, versioning, and documentation. It creates an auditable trail: which training data version produced which model version, with which hyperparameters, evaluated against which metrics. Without it, that trail exists in notebooks and memory – neither of which is reproducible or audit-ready. EU AI Act technical documentation

Does Switzerland need to comply with the EU AI Act?

Swiss enterprises are effectively within scope of the EU AI Act if they operate AI systems that affect people in the EU – which covers most Swiss banks, insurers, and technology companies with EU clients. FINMA has begun requesting AI governance disclosures aligned with EU standards, and Swiss national AI regulation is being developed in close alignment with the EU framework. For Swiss organizations serving EU markets, the practical answer is yes.

Where should we start if we have no formal AI data governance today?

Start with an inventory. List every AI system in use, including tools embedded in third-party software and any shadow AI in use by employees. Then classify each system by EU AI Act risk tier. Then audit logging and documentation coverage for your highest-risk systems. The gap between what you find and what the Act requires is your infrastructure roadmap. This is the order that produces the most actionable results the fastest.