EU & Global AI Regulations for Enterprises: Risk Classification & What to Do Next

Most enterprises are still having some version of this conversation internally: “We’ll deal with AI regulation when it’s actually enforced.” That conversation is over.

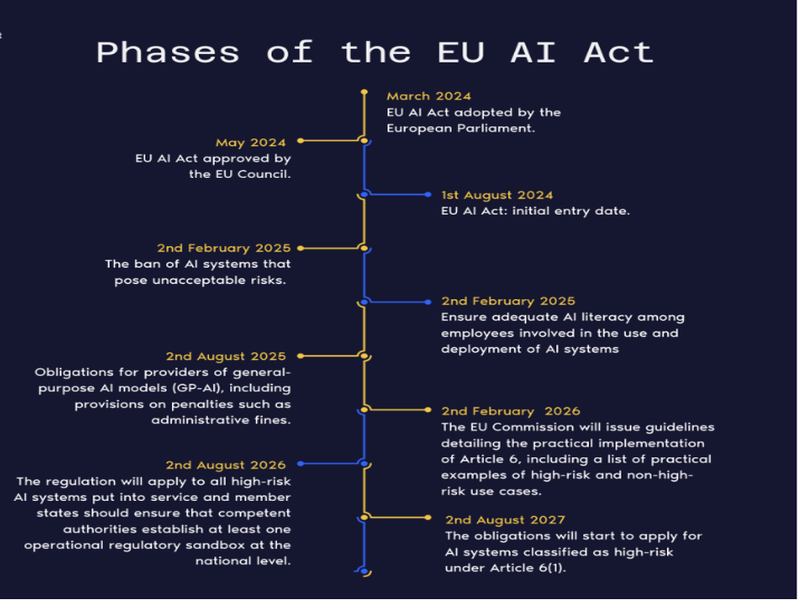

The EU AI Act is not a regulation on the horizon. Prohibited AI practices have been banned since February 2025. Rules for general-purpose AI models took effect in August 2025. And on August 2, 2026, the full weight of high-risk AI requirements comes into force. The fines are not theoretical: up to €35 million or 7% of global annual turnover – a ceiling that exceeds even GDPR.
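To make the phased timeline concrete, here is a minimal sketch that reports which milestones are already in force on a given date. The function names and labels are illustrative, not part of any official tooling, and the day-of-month for the August 2025 milestone is assumed to be the 2nd, in line with the other dates.

```python
from datetime import date
from typing import Optional

# Milestones as described in this article. The exact day for the
# August 2025 milestone is an assumption (2 August).
MILESTONES = [
    (date(2025, 2, 2), "prohibited practices banned"),
    (date(2025, 8, 2), "general-purpose AI model rules apply"),
    (date(2026, 8, 2), "high-risk AI requirements apply"),
]


def obligations_in_force(on: date) -> list:
    """Return the labels of all milestones that started on or before `on`."""
    return [label for start, label in MILESTONES if start <= on]


def days_until_next(on: date) -> Optional[int]:
    """Return the number of days to the next milestone, or None if all have passed."""
    upcoming = [start for start, _ in MILESTONES if start > on]
    return (min(upcoming) - on).days if upcoming else None
```

Calling `obligations_in_force(date.today())` gives a quick answer to "what already applies to us?" when planning compliance work.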

But the more important point isn’t the fine structure. The Act is a classification system, not a blanket ban. It sorts AI applications into risk levels and makes compliance proportionate to potential harm. The challenge – where most enterprises are currently failing – is knowing which systems sit in the high-risk category and building the right governance around them.

This article maps what matters: the four risk tiers, what each one requires, a practical compliance roadmap, and how global regulations compare. If you’ve already read our piece on Why Enterprise AI Fails in Production, you’ll know that governance gaps are where the problems start. This is the framework that closes them.

1. Who Does the EU AI Act Actually Apply To?

The EU AI Act (Regulation 2024/1689) applies to any organisation that develops, deploys, imports, or distributes AI systems whose outputs affect people in the European Union – regardless of where that organisation is headquartered.

A US fintech whose AI-powered credit tool is used by German customers is in scope. A Swiss bank using AI for loan decisions is in scope. A Vietnamese software firm building AI components for a French enterprise client is in scope. The Act follows the output, not the headquarters.

The Act distinguishes two primary roles with different obligations:

- Providers – organisations that develop or place an AI system on the EU market. They carry the heaviest obligations: conformity assessments, technical documentation, post-market monitoring, incident reporting.

- Deployers – organisations using AI under their own authority, including enterprises using third-party AI tools. Lighter but non-trivial obligations: human oversight, staff training, and in some cases a Fundamental Rights Impact Assessment.

One nuance worth flagging: if you significantly fine-tune an off-the-shelf model – retrain it on your data, change its intended purpose – you may become a provider under the Act, inheriting full provider obligations. Many enterprises building internal tools on foundation models are in this position without realising it.
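The provider/deployer distinction can be captured as a first-pass screening check. The sketch below is a simplification for internal triage, assuming three hypothetical yes/no questions drawn from the description above; it is not a legal test, and any "provider" result should go to counsel.

```python
from dataclasses import dataclass


@dataclass
class SystemUse:
    """Hypothetical triage answers for one AI system."""
    developed_or_marketed_by_us: bool  # we built it or placed it on the EU market
    significantly_fine_tuned: bool     # we retrained a third-party model on our data
    intended_purpose_changed: bool     # we use it beyond the vendor's stated purpose


def likely_role(use: SystemUse) -> str:
    """Return a first-pass guess at the role: 'provider' or 'deployer'."""
    if (use.developed_or_marketed_by_us
            or use.significantly_fine_tuned
            or use.intended_purpose_changed):
        return "provider"
    return "deployer"
```

An enterprise using a vendor tool as shipped would come out as a deployer; the same enterprise after heavy fine-tuning would be flagged as a possible provider.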

Switzerland & the EU AI Act

Switzerland is not an EU member, but Swiss enterprises serving EU markets are effectively within scope for their EU-facing AI deployments. KPMG Switzerland confirms the Act applies “to organisations based in Switzerland as far they use data of persons domiciled in the EU.” FINMA has already begun requesting AI governance disclosures aligned with EU standards, and the Swiss Federal Council is developing national AI regulation closely mirroring the EU framework.

2. The Four Risk Tiers – How the EU AI Act Classifies AI

The EU AI Act uses a risk-based approach to classify AI systems into four categories:

Unacceptable risk

AI systems that threaten fundamental rights, such as government-run social scoring systems, are banned outright.

High risk

These include systems used in law enforcement, critical infrastructure, employment, and access to essential services such as credit. Providers must meet strict requirements, including a risk management system, ongoing risk assessments, and data governance.

Limited risk

Systems in this category must meet transparency requirements. For example, users must be notified when they are interacting with a chatbot or viewing content produced by a generative AI model.

Minimal or low risk

These systems carry minimal compliance obligations under the Act. Following basic principles of data governance and ethical AI remains good practice.

This risk-based framework emphasizes AI accountability measures. It encourages businesses to proactively address risks and comply with the requirements set by the Act.

Note:

General Purpose AI (GPAI) models – large language models and foundation models – have their own separate obligations: training data transparency, cybersecurity standards, and for the most powerful models, systemic risk assessments. When a GPAI model is integrated into a high-risk application, the integrating organisation inherits the high-risk classification. Wrapping a public LLM in a credit decision tool does not lower its regulatory category.

3. Prohibited AI – What’s Banned Right Now

As of February 2, 2025, the following AI systems are banned from deployment in the EU – with no compliance pathway and fines up to €35M or 7% of global annual turnover:

- Real-time biometric identification in publicly accessible spaces (narrow law enforcement exceptions apply)

- AI that exploits psychological vulnerabilities – age, disability, financial distress – to manipulate behaviour

- Social scoring systems ranking individuals based on behaviour or personal characteristics

- Biometric categorisation based on political views, religion, or sexual orientation

- Predictive policing based purely on profiling, without individual behavioural data

- Emotion recognition in workplaces and educational institutions (non-safety purposes)

- Untargeted facial image scraping from the internet or CCTV to build recognition databases

Action Required

For any AI vendor’s product – especially in security, HR, or customer analytics – legal review must happen before procurement, technical development, or deployment begins. Deploying and then unwinding a prohibited system costs far more than assessing it up front.

4. High-Risk AI – What Compliance Actually Requires

This is where most enterprises get caught off guard. High-risk AI is not limited to facial recognition or autonomous weapons. It covers most AI investment in financial services, HR technology, and healthcare – including systems many organisations are already running.

For each high-risk system, compliance requires – before deployment:

- Risk management system: Maintained throughout the entire AI lifecycle – not a one-time assessment

- Data governance: Training data must be representative, documented, and audited for bias

- Technical documentation: System architecture, training approach, evaluation results – kept current

- Automatic logging: Every decision, data input, and model version in production must be traceable

- Human oversight: A person must be able to override or halt the AI – built into architecture, not retrofitted

- Conformity assessment + EU database registration: Before the system goes live

- AI literacy: All staff operating AI systems must have adequate, documented training
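The human oversight requirement in the list above is easier to reason about as code. Here is a minimal sketch of an override-and-halt gate, assuming the model's output is treated as a recommendation that a named reviewer can change. `OversightGate` and `Decision` are hypothetical names for illustration, not part of any framework.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Decision:
    outcome: str        # the outcome actually applied
    model_outcome: str  # what the model recommended
    overridden: bool    # True when the reviewer changed the outcome
    reviewer: str       # who was accountable for the decision


class OversightGate:
    """Routes every model recommendation past a human who can override or halt."""

    def __init__(self) -> None:
        self.halted = False

    def halt(self) -> None:
        """Stop the system from issuing any further decisions."""
        self.halted = True

    def decide(self, model_outcome: str, reviewer: str,
               reviewer_outcome: Optional[str] = None) -> Decision:
        if self.halted:
            raise RuntimeError("AI system halted by human operator")
        # The reviewer's outcome wins whenever one is supplied.
        final = reviewer_outcome if reviewer_outcome is not None else model_outcome
        return Decision(final, model_outcome, final != model_outcome, reviewer)
```

Placing the gate in the decision path, rather than beside it, is what makes the override part of the architecture and not an afterthought.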

Non-compliance with high-risk obligations carries fines up to €15M or 3% of global annual turnover – separate from, and stackable with, GDPR penalties. GDPR compliance does not satisfy the EU AI Act: GDPR governs data processing; the AI Act governs how AI systems behave and are overseen. For high-risk AI that also processes personal data, both frameworks apply simultaneously.
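The two fine tiers quoted in this article can be turned into a quick exposure estimate. The sketch below assumes the Act's "whichever is higher" rule for larger undertakings and "whichever is lower" for SMEs, a detail the article does not spell out. The function and constant names are illustrative, and amounts are whole euros so the arithmetic stays exact.

```python
# (fixed cap in EUR, percentage of global annual turnover)
PROHIBITED_PRACTICES = (35_000_000, 7)
HIGH_RISK_OBLIGATIONS = (15_000_000, 3)


def fine_ceiling(turnover_eur: int, fixed_cap_eur: int, turnover_pct: int,
                 sme: bool = False) -> int:
    """Return the maximum fine in EUR for a given global annual turnover.

    Assumption: larger undertakings face the higher of the two amounts,
    SMEs the lower.
    """
    pct_amount = turnover_eur * turnover_pct // 100
    if sme:
        return min(fixed_cap_eur, pct_amount)
    return max(fixed_cap_eur, pct_amount)


# A group with EUR 2 billion turnover: 7% is EUR 140 million, above the fixed cap.
exposure = fine_ceiling(2_000_000_000, *PROHIBITED_PRACTICES)
```

For a large enterprise the percentage usually dominates, which is why the turnover figure matters more than the headline euro amount.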

5. Mapping Common AI Use Cases to Risk Levels

Before you build a compliance program, you need to know where your AI systems actually sit. A study of 106 enterprise AI systems found that 40% had unclear risk classifications. That ambiguity is not a defensible position with regulators.

| Use Case | Risk Tier | Key Trigger |
|---|---|---|
| CV screening / hiring AI | High Risk | Employment decisions |
| Credit scoring / loan decisions | High Risk | Financial access decisions |
| AI medical diagnosis support | High Risk | Health & safety impact |
| Fraud detection (flagging only, human review) | Likely Minimal | No direct rights impact if human reviews every flag |
| Customer service chatbot | Limited Risk | Transparency obligation – must disclose AI interaction |
| LLM integrated into credit decision workflow | High Risk | Use case inherits high-risk tier regardless of model |
| Internal document summarisation | Minimal Risk | No decisions about people; no sensitive data |
| Employee performance monitoring AI | High Risk | Employment evaluation decisions |

The pattern to watch: LLMs and GenAI tools are not inherently high-risk. But when integrated into high-risk workflows – credit decisions, hiring, medical triage – the integrating organisation inherits the high-risk classification. Our case studies show how this plays out in BFSI and healthcare environments specifically.
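The inheritance pattern can be expressed as a small rule: a system takes the highest tier of any use case it touches, whatever model sits underneath. The sketch below assumes a hand-maintained mapping based on the use cases discussed in this section. The tag names are hypothetical, and unmapped use cases are deliberately rejected so they go to legal review.

```python
from enum import IntEnum


class RiskTier(IntEnum):
    MINIMAL = 1
    LIMITED = 2
    HIGH = 3
    UNACCEPTABLE = 4


# Hypothetical tags mirroring the use cases in this section.
TRIGGERS = {
    "employment_decisions": RiskTier.HIGH,
    "credit_decisions": RiskTier.HIGH,
    "medical_diagnosis_support": RiskTier.HIGH,
    "employee_monitoring": RiskTier.HIGH,
    "customer_chatbot": RiskTier.LIMITED,
    "document_summarisation": RiskTier.MINIMAL,
}


def classify(use_cases: set) -> RiskTier:
    """Return the highest tier among a system's use cases.

    The underlying model is intentionally not an input: the tier follows
    the use case, not the technology.
    """
    unknown = use_cases - set(TRIGGERS)
    if unknown:
        raise ValueError(f"unmapped use cases need legal review: {sorted(unknown)}")
    # A system with no tagged use cases falls back to the lowest tier.
    return max((TRIGGERS[u] for u in use_cases), default=RiskTier.MINIMAL)
```

A chatbot that also feeds a credit decision, tagged `{"customer_chatbot", "credit_decisions"}`, comes out as high risk, which is the inheritance described above.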

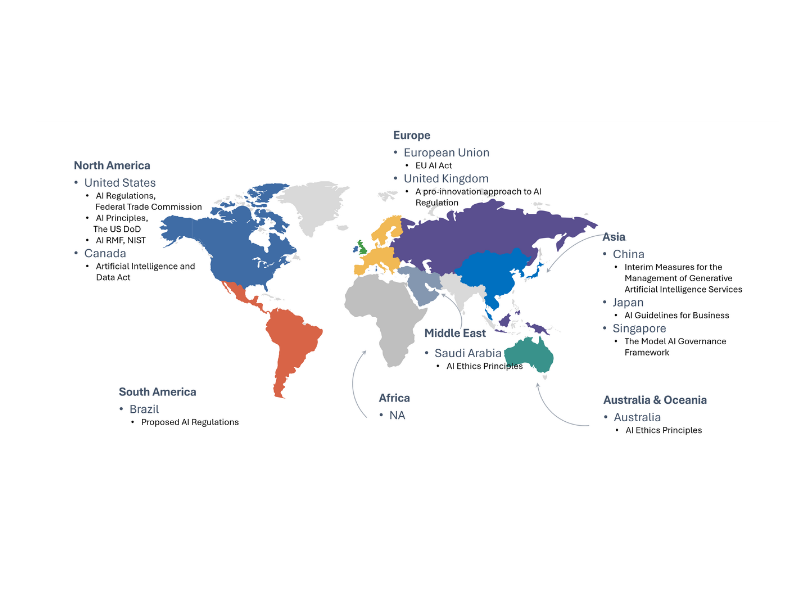

6. Global AI Regulations – Where Other Markets Stand

The EU AI Act is the most comprehensive AI regulation in force – but not the only one. For organisations with multi-jurisdictional deployments, EU compliance is the highest common denominator worth building around.

United States

No federal AI law equivalent to the EU AI Act as of 2026. The Biden Executive Order on AI was substantially rolled back in early 2025. What remains: FDA guidance on AI in medical devices, CFPB rules on AI credit decisions, EEOC guidance on AI hiring, and active state-level legislation in California, Colorado, Illinois, and Texas. Meeting EU AI Act standards largely satisfies existing US sector-specific requirements.

United Kingdom

Deliberately pro-innovation. The UK relies on existing regulators (FCA, CMA, ICO) applying AI principles sector by sector rather than a cross-sector law. UK firms selling AI to EU customers must still comply with the EU AI Act regardless.

China

China has moved fastest outside the EU. Generative AI Regulation (effective August 2023), algorithm transparency requirements, and mandatory government registration for certain systems are already in force. China requires a separate compliance track – EU and Chinese obligations do not transfer between frameworks.

India & Vietnam

India’s Digital Personal Data Protection Act and 2024 AI governance framework take a principles-based approach directionally consistent with EU standards. Vietnam’s AI strategy through 2030 explicitly references international standards including EU frameworks – and for Vietnamese software companies supplying AI components to EU or US clients, alignment with international governance standards is increasingly a market access condition.

The Bottom Line on Global AI Regulation

If your enterprise operates across borders – or if you use AI vendors headquartered outside your jurisdiction – you’re almost certainly subject to multiple overlapping regulatory frameworks. Building compliance around the EU AI Act gives you a strong foundation that transfers well to other regimes.

7. Enterprise AI Compliance Roadmap

EU AI Act compliance is an engineering project with legal requirements – not a legal project with technical footnotes. It requires your CTO, legal counsel, data teams, and business leads working from the same classification framework.

Step 1: Inventory all AI systems – including third-party tools and shadow AI (GenAI tools in use without IT approval). You cannot classify what you haven’t found.

Step 2: Classify each system by risk tier – apply the EU AI Act framework to every system. For borderline cases, lean conservative. Misclassifying a high-risk system costs far more than a few extra compliance steps.

Step 3: Run conformity assessments for high-risk AI – technical documentation, bias testing, data governance review. Document everything. This needs to be maintained, not filed away.

Step 4: Build human oversight into architecture – high-risk AI decisions must have an override mechanism. This cannot be retrofitted; it needs to be in the design.

Step 5: Establish audit logging – every decision, every data input, every model version in production must be traceable. If you can’t reconstruct why the AI made a specific decision, your audit trail isn’t good enough.

Step 6: Issue and enforce a GenAI usage policy – document which AI tools employees are authorised to use, for what purposes, and with what data. Access controls, not just guidelines.
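As an illustration of Step 5, here is a minimal sketch of an audit record that ties each decision to its inputs and model version. The field names are assumptions rather than a mandated schema, and a production system would also need tamper-resistant storage and a retention policy.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def audit_record(system_id: str, model_version: str, inputs: dict,
                 decision: str, reviewer: Optional[str] = None) -> dict:
    """Build one traceable log entry for a single AI decision."""
    # Serialise with sorted keys so identical inputs always hash identically.
    payload = json.dumps(inputs, sort_keys=True)
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system_id": system_id,
        "model_version": model_version,
        "inputs": inputs,
        "input_sha256": hashlib.sha256(payload.encode("utf-8")).hexdigest(),
        "decision": decision,
        "human_reviewer": reviewer,
    }


# Example: one entry, serialised as a single JSON line for an append-only log.
entry = audit_record("credit-scoring", "1.4.2",
                     {"income": 52000, "term_months": 36}, "refer", "alice")
log_line = json.dumps(entry)
```

Recording the model version alongside the inputs is what lets you reconstruct a decision after the model has been retrained.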

If you’re in financial services, insurance, or healthcare and want to understand what full compliance looks like in your specific sector, talk to our team. This is exactly the kind of multi-framework challenge we work through with clients.

8. Conclusion

The EU AI Act is not coming – it’s here. The prohibited AI provisions are already enforced. The high-risk deadline is running. And if your enterprise operates across borders, you’re navigating multiple overlapping regulatory frameworks at once.

The organisations that get this right won’t just avoid fines. They’ll build AI systems that are more reliable, more auditable, and more trusted – by regulators, clients, and the markets they operate in. The window to build compliance in rather than retrofit it afterward is narrowing fast.

Navigating the complexities of the EU AI Act can be challenging, but you don’t have to do it alone. IMT Solutions’ expertise in AI regulatory compliance, governance, and certification helps ensure your AI systems meet the highest standards of safety and ethics.

If you want to take a step further and advance your organization’s AI maturity and compliance readiness, check out our case studies to prepare for emerging AI regulations. Partner with IMT Solutions to ensure your AI systems are compliant, trustworthy, and future-proof. Contact us today to learn how we can support your AI journey.

9. FAQ: EU AI Act Compliance for Enterprises

What is the EU AI Act and who does it apply to?

The EU AI Act (Regulation 2024/1689) is the world’s first comprehensive legal framework for artificial intelligence. It entered into force on August 1, 2024, with phased enforcement through 2027. It applies to any organisation – regardless of headquarters location – that develops, deploys, imports, or distributes AI systems whose outputs affect people in the EU. This includes US companies with EU customers, Swiss banks using AI for credit decisions, and Asian software vendors supplying AI components to European clients.

What AI systems are prohibited under the EU AI Act?

Since February 2, 2025, the EU AI Act bans: real-time biometric identification in public spaces; social scoring systems; AI exploiting psychological vulnerabilities to manipulate behaviour; biometric categorisation based on political views, religion, or sexual orientation; predictive policing based purely on profiling; emotion recognition in workplaces and educational institutions for non-safety purposes; and untargeted facial image scraping to build recognition databases. Violations carry fines up to €35 million or 7% of global annual turnover.

What makes an AI system ‘high risk’ under the EU AI Act?

An AI system is high risk when used in a domain where errors, bias, or opacity could cause significant harm to health, safety, or fundamental rights. High-risk uses include credit scoring, loan decisions, employment screening, medical diagnosis support, critical infrastructure management, law enforcement tools, border control, and educational access decisions. Classification is based on use case, not technology – the same model can be minimal risk in one deployment and high risk in another.

How does Switzerland fit into EU AI Act compliance?

Switzerland is not an EU member state, but Swiss enterprises are effectively within scope of the EU AI Act if they deploy AI systems affecting people in the EU – which covers most Swiss banks, insurers, and software companies serving European markets. KPMG Switzerland confirms the Act applies “to organisations based in Switzerland as far they use data of persons domiciled in the EU.” FINMA has already begun requesting AI governance disclosures aligned with EU standards.

Does GDPR compliance satisfy the EU AI Act?

No. GDPR governs how personal data is collected, stored, and processed. The EU AI Act governs how AI systems are designed, make decisions, and are overseen. For high-risk AI systems that also process personal data, both frameworks apply simultaneously. Fines under each are independent and can stack.

How does the EU AI Act affect small businesses and startups?

The EU AI Act applies proportionate requirements to limit the regulatory burden on small businesses and startups. Compliance is still mandatory, but obligations are scaled to the size and resources of the organisation, which supports innovation and competitiveness among smaller entities (PwC, 2024).