What Is Enterprise AI? Types, Risks, and EU Compliance Explained

Quick answer:

Enterprise artificial intelligence (AI) is the application of AI technologies to address business challenges within an organization. It involves using machine learning, deep learning, natural language processing (NLP), and other AI techniques to automate processes, improve decision-making, and create new products and services.

Somewhere in your organization right now, there is probably an AI system making a decision. Maybe it is scoring credit applications. Maybe it is drafting email responses. Maybe it is flagging unusual transactions before a human ever sees them. Maybe it is doing all three – built by different vendors, deployed by different teams, governed by nobody in particular.

That is the reality of enterprise AI in 2026. It is not one technology. It is a family of very different tools that happen to share the same label, and the difference between them matters more than most organizations realize. The EU AI Act does not regulate “AI” as a category. It regulates what AI does, and what it does to whom. Getting that distinction right is the difference between a compliance-ready deployment and an expensive problem waiting to happen.

1. What Is Enterprise AI?

Enterprise AI is the integration of advanced AI-enabled technologies within large organizations to enhance business functions, from routine tasks like data collection and analysis to more complex operations like automation, customer service, and risk management.

The key distinction from traditional software is how it operates. Standard software follows rules – if this, then that. Enterprise AI learns from data. Show it enough examples of a fraudulent transaction, and it learns to recognize fraud. Show it thousands of customer queries, and it learns to answer them. It does not actually understand anything in a human sense – it finds patterns and makes educated guesses based on what it has seen before.

That distinction is not technical trivia. It is what determines where enterprise AI excels, where it fails, and why governing it requires a different approach than governing conventional software.

The Main Types of Enterprise AI

Enterprise AI is not one technology. It is a family of tools, each built on different principles, suited to different tasks. They are most usefully organized into five capability groups.

Group 1 – AI That Predicts: Predictive Analytics, Machine Learning & Reinforcement Learning

Predictive AI and analytical AI are what most enterprises deployed first, even when they were not calling it “AI.” Predictive AI analyzes historical data to forecast what comes next. Machine learning (ML) models identify patterns across large datasets and apply them to new situations.

Reinforcement learning takes a different approach. Instead of learning from a historical dataset, it learns by doing – taking actions, receiving rewards or penalties, and optimizing its behavior over time. You see it in algorithmic trading strategies that learn from market outcomes and in logistics routing that continuously improves.
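The learn-by-doing loop can be illustrated with an epsilon-greedy bandit, one of the simplest reinforcement learning setups. This is a toy sketch, not a production routing system: the route names, reward values, noise level, and the `run_epsilon_greedy` helper are all hypothetical.

```python
import random

# Hypothetical average rewards per delivery route (higher is better).
# Real rewards would come from observed outcomes, not a lookup table.
TRUE_MEAN_REWARD = {"route_a": 0.2, "route_b": 0.5, "route_c": 0.8}


def observe_reward(route, rng):
    """Simulate one noisy outcome for a route (noise bounded at +/- 0.1)."""
    return TRUE_MEAN_REWARD[route] + rng.uniform(-0.1, 0.1)


def run_epsilon_greedy(steps=500, epsilon=0.1, seed=0):
    """Learn which route pays best by trying routes and averaging rewards."""
    rng = random.Random(seed)
    routes = list(TRUE_MEAN_REWARD)
    pulls = {r: 0 for r in routes}
    estimate = {r: 0.0 for r in routes}

    for step in range(steps):
        if step < len(routes):
            route = routes[step]                   # try every route once first
        elif rng.random() < epsilon:
            route = rng.choice(routes)             # explore: pick a random route
        else:
            route = max(routes, key=estimate.get)  # exploit the best estimate

        reward = observe_reward(route, rng)
        pulls[route] += 1
        # Incremental running average of the rewards seen for this route.
        estimate[route] += (reward - estimate[route]) / pulls[route]

    return estimate, pulls


estimate, pulls = run_epsilon_greedy()
best_route = max(estimate, key=estimate.get)
print(best_route, pulls)
```

The agent is never told which route is best. It discovers that from rewards alone, which is also why its behavior depends on how the reward is defined.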

In practice: Banks use predictive AI to assess credit risk before a loan is approved. Insurance companies use it to estimate claim probability and set premiums. Retailers use it to forecast demand and prevent stockouts. Anywhere a business needs to make a high-volume decision informed by past data, predictive AI is typically running underneath it.

Group 2 – AI That Creates: Generative AI & NLP

Generative AI produces new content that did not exist before – text, code, images, audio – by learning patterns from vast training data. Natural Language Processing (NLP) gives AI systems the ability to read, interpret, and produce human language. The two are closely linked: large language models like GPT-4 or Claude use NLP to understand prompts and generative capabilities to respond.

In enterprise settings, teams use generative AI for contract review and summarization, financial report drafting, internal knowledge bases, code assistance, and customer communication. Enterprise spending on generative AI reached $37 billion in 2025 – a 3.2x year-over-year increase – with more than half of that going to application-layer tools rather than underlying models.

In practice: Legal teams use generative AI to summarize contracts and flag unusual clauses. Financial analysts use it to draft first-pass reports and earnings summaries. IT teams use AI coding assistants to accelerate development and catch bugs. Any workflow that involves reading, writing, or processing language is a candidate for this type of AI.

Group 3 – AI That Talks: Conversational AI

Conversational AI combines NLP with dialogue management to hold structured conversations with users – across text, voice, or messaging channels. This is the technology powering customer service chatbots, virtual assistants in banking apps, internal HR tools, and automated onboarding flows.

By 2026, 87.2% of contact centers had already adopted AI and machine learning. The challenge is no longer whether to deploy it – it is how to govern what it says when no human is watching.

In practice: Insurance companies use it to guide customers through claims submissions. Internal HR tools answer employee questions about policies, payroll, or benefits automatically.

Group 4 – AI That Sees: Computer Vision

Computer vision gives machines the ability to interpret visual information – images, video feeds, scanned documents, and real-time camera streams. It converts what it “sees” into structured, actionable output.

In financial services and insurance, the most common enterprise application is document processing, where OCR tools extract text from scanned forms and identity documents. Computer vision also powers biometric authentication – the face recognition in your banking app, the fingerprint scanner at the data center entrance. That is where the regulatory line becomes sharp, and where the EU AI Act imposes the toughest restrictions.

In practice: Banks use it to verify identity documents during onboarding. Manufacturers use it on production lines to detect defects that human inspectors would miss at high speed. Any organization handling large volumes of visual or document-based information can typically apply computer vision to speed up and standardize that work.

Group 5 – AI That Acts: Autonomous & Agentic AI

Agentic AI is the newest and most consequential category. It takes goals and acts on them. It plans multi-step workflows, uses tools, accesses external systems, and executes tasks without needing human approval at each step.

In 2025, 44% of companies were either deploying or assessing agentic AI. By early 2026, many of those experiments had become full production deployments.

In practice: Financial institutions use agentic AI to execute multi-step compliance workflows. Operations teams use AI agents to monitor systems, detect anomalies, and trigger remediation steps before a human is even alerted. Any complex, multi-system process that currently requires a human to coordinate between tools is a candidate for agentic automation.

2. What Are the Real Risks?

Every AI type described above creates a different failure mode. Understanding them is more useful than a generic warning about “AI risks.”

Predictive AI fails quietly. A credit scoring model or insurance pricing engine can be systematically wrong – encoding historical bias or drifting out of calibration – while producing outputs that look precise and confident. The number is clean; the distortion is invisible. Without active monitoring, no one notices until the pattern shows up in audits or regulatory findings.
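One common way to make this kind of quiet drift visible is the Population Stability Index (PSI), which compares the distribution of model scores today against a baseline. The sketch below is a minimal illustration under stated assumptions: the score values, the bin edges, and the helper names `bin_shares` and `population_stability_index` are hypothetical, and it does not describe any particular monitoring product.

```python
import math


def bin_shares(scores, edges):
    """Return the share of scores in each bin; scores outside edges are ignored."""
    counts = [0] * (len(edges) - 1)
    for s in scores:
        for i in range(len(counts)):
            last = i == len(counts) - 1
            # Bins are [low, high), except the last bin, which includes high.
            if edges[i] <= s < edges[i + 1] or (last and s == edges[-1]):
                counts[i] += 1
                break
    total = sum(counts)
    return [c / total for c in counts]


def population_stability_index(expected, actual, floor=1e-6):
    """PSI = sum((actual - expected) * ln(actual / expected)) over bins."""
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, floor), max(a, floor)  # avoid log(0) on empty bins
        psi += (a - e) * math.log(a / e)
    return psi


edges = [0, 25, 50, 75, 100]
baseline_scores = [10, 20, 30, 40, 60, 70, 80, 90]         # hypothetical
current_scores = [10, 30, 40, 60, 65, 70, 80, 85, 90, 95]  # hypothetical

baseline = bin_shares(baseline_scores, edges)
current = bin_shares(current_scores, edges)
psi = population_stability_index(baseline, current)
print(round(psi, 3))
```

With these made-up scores the PSI comes out at about 0.23. A commonly cited rule of thumb treats values above roughly 0.1 as worth investigating and above roughly 0.25 as a significant shift. Those thresholds are conventions, not regulatory requirements.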

Generative AI fails loudly but not always obviously. Hallucination – producing confident, fluent, completely wrong information – is the known failure mode. In a legal document, a financial disclosure, or a client communication, that is not a typo. It is a liability. The other risk is less discussed: fine-tune a large language model on your company’s proprietary data, and you may find yourself legally classified as an AI provider with full regulatory obligations.

Conversational AI fails at the interface. When a chatbot gives a customer wrong information about a loan term or compliance deadline, the customer does not know it is wrong – and acts on it. In regulated industries, the organization is accountable for what the chatbot said, not just what it intended.

Computer vision fails where accuracy matters most. A misread identity document, a misclassified medical image, a misidentified person – the system does not know it was wrong. The affected individual does, usually after the damage is done.

Agentic AI fails at scale. These systems execute at machine speed. When something goes wrong – and every complex system eventually does – it can go wrong across multiple downstream systems before anyone notices. The accountability question is also unresolved in most organizations: when an AI agent takes an unauthorized action, who is responsible?
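One way to make the accountability question concrete is to route every agent action through a gate that checks it against a policy and records who owns the outcome. The sketch below is a hedged illustration only: the action names, the `AuditedAgentGate` class, and the email address are hypothetical and do not correspond to any specific agent framework.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy: actions the agent may run on its own, and actions
# that need a named human approver first. Anything else is refused.
AUTO_APPROVED = {"read_ticket", "restart_service"}
NEEDS_HUMAN = {"issue_refund", "close_account"}


@dataclass
class AuditedAgentGate:
    owner: str                                # person accountable for this agent
    log: list = field(default_factory=list)   # append-only audit trail

    def request(self, action, approver=None):
        """Decide whether an action may run and record the decision."""
        if action in AUTO_APPROVED:
            decision = "allowed"
        elif action in NEEDS_HUMAN and approver:
            decision = "allowed"
        elif action in NEEDS_HUMAN:
            decision = "pending_approval"
        else:
            decision = "denied"
        self.log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "decision": decision,
            "approver": approver,
            "owner": self.owner,
        })
        return decision


gate = AuditedAgentGate(owner="ops-lead@example.com")
print(gate.request("restart_service"))                 # allowed
print(gate.request("issue_refund"))                    # pending_approval
print(gate.request("issue_refund", approver="j.doe"))  # allowed
print(gate.request("delete_database"))                 # denied
```

The design choice worth noting is that denied and pending requests are logged as well, so the audit trail shows what the agent attempted, not only what it did.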

3. Can AI Replace Humans? What It Does Better, and Where It Falls Short

This is the question that generates the most heat and the least precision. The honest answer is: it depends entirely on what you mean by “replace,” and which specific task you are asking about.

Where AI outperforms humans:

AI processes information at a speed and scale that humans cannot match. It can review ten thousand contracts for a specific clause, score a million transactions for fraud risk, or generate fifty variations of a product description – all before a human analyst has opened a single file. Consistency is another genuine advantage: AI applies the same rules every time, without fatigue, distraction, or mood. For repetitive, high-volume, pattern-recognition tasks, AI is faster, cheaper, and often more accurate.

What humans do better:

The limitation of AI is that it lacks true understanding, emotional intelligence, and adaptability. It depends heavily on data quality and human oversight, and it cannot replace human judgment in complex, ethical, or ambiguous situations. A credit officer can read context that does not fit the model. A claims adjuster can recognize a legitimate exception. These are not skills that current AI systems possess – and they matter most precisely in the cases where the stakes are highest.

Creativity is worth naming specifically. AI can generate content that looks creative. If you ask it for a poem, it will write a poem. Whether that poem is as good as one written by a skilled poet is a different question. AI imitates creativity – it does not originate it.

The practical conclusion for enterprise leaders

The most effective AI deployments are not the ones that replace humans – they are the ones that redirect human attention. The AI system handles the pattern-recognition layer while humans focus on the judgment layer.

Two-thirds of organizations now report productivity and efficiency gains from AI, but only 20% are seeing revenue growth – suggesting that most enterprises have successfully used AI to do the same things faster, without yet rethinking what they should be doing differently.

4. EU AI Act Compliance: What Enterprise Teams Need to Know

The EU AI Act is the world’s first comprehensive AI regulation. It is actively enforced – every enterprise deploying AI systems that affect EU users needs to understand where those systems sit in the regulatory framework.

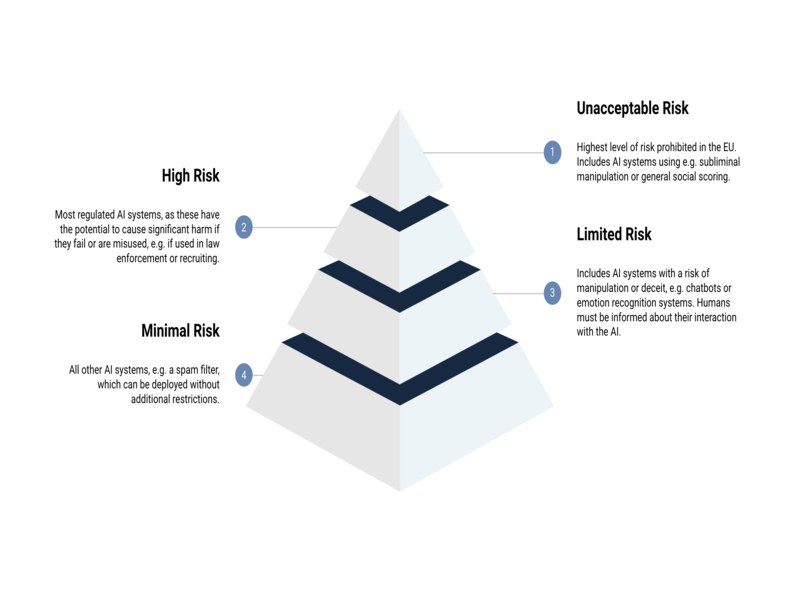

The four risk tiers:

Prohibited – outright banned. Social scoring systems that evaluate individuals based on behavior. Real-time remote biometric identification in public spaces, with very narrow law enforcement exceptions. These prohibitions have been in force since February 2025.

High-risk – full compliance required. AI systems in financial services – including credit scoring, insurance pricing, and biometric identification – are classified as high-risk. So are AI systems used in hiring, critical infrastructure management, and law enforcement. High-risk systems require documented risk management frameworks, human oversight mechanisms, explainability to regulators, and audit trails.

Limited-risk – transparency obligations only. Chatbots must disclose that users are talking to AI. AI-generated content must be labeled. These requirements are simple but enforced.

Minimal-risk – no additional obligations beyond existing EU law.

Keep in mind:

A study of 106 enterprise AI systems found 18% were high-risk, 42% low-risk, and 40% unclear on risk classification. That 40% unclear is where most organizations are sitting right now. Uncertainty is not a defense when a regulator asks.

Key compliance timeline:

- Prohibited practices: banned since February 2025.

- General-purpose AI governance: August 2025.

- High-risk financial AI: August 2026.

- All remaining provisions: August 2027.
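For planning purposes, the timeline above can be kept as data and queried by date. The snippet below is a rough sketch, not legal guidance. The article gives months only, so the day of the month used here is an assumption to verify against the Act's own text, and the function names are hypothetical.

```python
from datetime import date

# Milestones as listed above. The day of month is an assumption; the
# article states month and year only.
MILESTONES = [
    (date(2025, 2, 2), "Prohibited practices"),
    (date(2025, 8, 2), "General-purpose AI governance"),
    (date(2026, 8, 2), "High-risk financial AI"),
    (date(2027, 8, 2), "All remaining provisions"),
]


def obligations_in_force(on):
    """Return the milestone labels whose start date is on or before `on`."""
    return [label for start, label in MILESTONES if start <= on]


def next_milestone(on):
    """Return (days_remaining, label) for the next milestone, or None."""
    upcoming = [(start, label) for start, label in MILESTONES if start > on]
    if not upcoming:
        return None
    start, label = min(upcoming)
    return (start - on).days, label


today = date(2026, 1, 15)  # example date; swap in date.today() for real use
print(obligations_in_force(today))
print(next_milestone(today))
```

Keeping the dates in one table means a change to the schedule is a one-line edit rather than a search through planning documents.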

The Act’s global reach means any AI provider or deployer whose systems affect users in the EU must comply, regardless of where they are incorporated. A US bank with EU retail clients, a software company selling AI tools to German insurers, a UK wealth manager with EU clients – all in scope.

5. Frequently Asked Questions About Enterprise AI

What is the simplest definition of enterprise AI?

Enterprise AI is artificial intelligence deployed inside an organization to improve business outcomes – automating decisions, generating content, detecting patterns, or taking autonomous action. Unlike consumer AI tools, it integrates into business processes, connects to organizational data, and operates in regulatory contexts that consumer tools do not face. It covers a wide range of technologies, from statistical models that have existed for decades to agentic systems only now entering production use.

What are the main types of enterprise AI?

Enterprise AI falls into five main capability groups: AI that predicts (predictive analytics, machine learning, reinforcement learning), AI that creates (generative AI, NLP), AI that talks (conversational AI and chatbots), AI that sees (computer vision and document processing), and AI that acts (autonomous and agentic AI). Most modern enterprise deployments combine more than one of these – a fraud detection platform might use predictive ML to score risk and conversational AI to communicate alerts.

Which AI types are classified as high-risk under the EU AI Act?

The Act classifies by use case, not by technology. AI used in credit scoring, insurance pricing, biometric identification, employment decisions, education assessments, and critical infrastructure management is classified as high-risk – regardless of which underlying technology powers it. An ML model in credit decisions, computer vision in identity verification, and an agentic workflow managing financial operations can all land in the high-risk tier, triggering documentation, oversight, and audit trail requirements.

What should an enterprise do first to assess its AI compliance posture?

Start with an inventory. List every AI system in use across the organization, including tools embedded in purchased software from third-party vendors. For each system, identify its use case and map it against the EU AI Act’s four risk tiers. This exercise tends to resolve the 40% of “unclear” systems quickly, because most become clear once use-case mapping is applied. Given the August 2026 deadline for high-risk financial AI, any institution that has not done this is already on compressed timelines.
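The inventory-and-mapping step can start as something as small as a lookup table. The sketch below assumes a simplified mapping drawn from the examples in this article. The system names, owners, and use-case labels are hypothetical, the `minimal` entry is an assumption, and a real classification needs legal review rather than a dictionary lookup.

```python
from collections import Counter

# Hypothetical, simplified lookup from use case to risk tier, based on the
# examples in this article. It is illustrative and far from complete.
TIER_BY_USE_CASE = {
    "social_scoring": "prohibited",
    "credit_scoring": "high",
    "insurance_pricing": "high",
    "biometric_identification": "high",
    "hiring": "high",
    "customer_chatbot": "limited",
    "content_generation": "limited",
    "demand_forecasting": "minimal",   # assumption, not stated in the article
}

# Hypothetical inventory rows: (system name, vendor or team, use case).
inventory = [
    ("LoanScore", "vendor_a", "credit_scoring"),
    ("HelpBot", "cx_team", "customer_chatbot"),
    ("StockCast", "data_team", "demand_forecasting"),
    ("DocAgent", "vendor_b", "contract_triage"),
]


def classify(inventory):
    """Attach a risk tier to each system; unmapped use cases stay 'unclear'."""
    return [
        {"system": name, "owner": owner, "use_case": use_case,
         "tier": TIER_BY_USE_CASE.get(use_case, "unclear")}
        for name, owner, use_case in inventory
    ]


classified = classify(inventory)
summary = Counter(row["tier"] for row in classified)
needs_review = [row["system"] for row in classified if row["tier"] == "unclear"]
print(dict(summary))
print(needs_review)
```

Anything that lands in `needs_review` is a system whose use case has not been mapped yet, which is the list to take to legal and compliance first.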

6. Conclusion

AI is not one technology making one kind of decision. It is a family of tools – each suited to different problems, each creating different risks, each sitting in a different place in the regulatory landscape. The enterprises that navigate this well are the ones that have moved past the general category of “AI” and can say, clearly, what they have deployed, what it does, who is accountable for it, and how it will be explained to a regulator if asked.

Twice as many enterprise leaders as last year report transformative business impact from AI – but just 34% say they are truly reimagining how the business operates. The gap between “using AI” and “governing AI well” is where most organizations sit in 2026. Closing that gap starts with knowing what you actually have.

IMT Solutions has spent 17 years building AI systems for BFSI and enterprise clients – systems where the technical build and the compliance layer are designed together from day one, not treated as separate workstreams. If you are mapping your AI landscape or planning your next deployment, explore our case studies or contact IMT Solutions to talk through how we approach it.